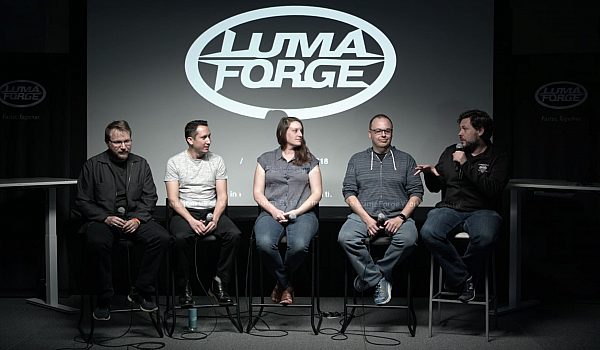

Michael Kammes, of Key Code Media, dispels myths of post production for a live audience at NAB. He talks about HDR, audio distortion, drive capacity, grading monitors, YouTube uploads and adding content to a file without a new export.

My name is Michael Kammes. Who here knows who I am? Does anyone here know who I am? Thanks, Mom. Thank you. Thank you. Um, I do two things. During the day, I have a mild-mannered job as director of technology for Key Code Media. And at night they let me wear a cape and do a web series called 5 THINGS. So has anyone here heard of 5 THINGS? Mom? Again, thank you. Okay, so what 5 THINGS is, is a web series where I cover a lot of the hot topics in the industry, but I get away from the marketing-ish, right? Anytime you go to a manufacturer's webpage and you look at, you know, specs and speeds and feeds, you know, it's all best case, right? And that's all what the manufacturer says it can do, not what real world is. And so what I try to do is dispel a lot of the stuff that you see out there that may not be real and explain concepts that not a lot of people know.

Beginners may not know. We're at NAB, so I'm sure all of you know all this. So I expect some people to be nodding off. But there are folks who don't understand some of these concepts. Things like HDR, things like LTO, asset management, transcoding, and post tells me that gets all the women. Women love transcoding, trust me. But one of the most popular ones I did is something called post myths. You know, anyone here that spends any amount of time on help forums or on Facebook groups, there's always questions out there where you would just shake your head and you do a facepalm and you're like, wow, people really think that. And it's not the questions, it's people answering those questions with the most asinine answers, right? So you almost have to, you know, correct the correctors. And so what I did with this episode was take some popular myths that I've seen in post production and try to distill them down to the basics.

And so I thought I'd go through a few of those with you today. Sound good? Yeah. Okay. The first one is transcoding to a better codec will improve quality, right? How many people have shot on a GoPro and someone says, just make it into ProRes and it'll look better? Okay, we'll cover that. Log formats are the same as HDR, right? Next is you can grade video on a computer monitor. I've actually seen fights break out when I've presented this before, between computer and video people. It's pretty interesting. Nerd fight. You can fix audio distortion. As an audio guy, this is one of my favorite things to talk about. Um, storage is the same as capacity, or marketing versus engineering. Uh, and then if we have time, I have two more to go over, and that's that, uh, you need to create a YouTube format if you want to upload to YouTube.

And the last one is picture or audio changes require a new export. Like if you mess something up in your timeline, you have to re-export the entire thing. It's not exactly the case. So let's get started. First, I asked this question on Facebook and Andrew Webb said, yes, changing 8-bit to 16-bit will give you more color. Also, changing 4:2:0 to 4:4:4 will give you more color, and also dropping the project hard drive will make the pixels blurry. But I'm not going to cover that one. So the things that we're answering in this myth: well, we want to improve visual quality, right? Why would we transcode? Well, we want to improve it. Um, it's easier for the computer to use, right? You've all heard that working with compressed media like H.264 or long-GOP footage doesn't play well with CPUs, so we need something that isn't long-GOP or compressed.

And also, I read on a forum that I should use it. That's popular on Creative COW. So, and this gets into some really nerdy stuff, stuff that, you know, when you want to clear a room at night, you bring up this stuff: bit depth, chroma subsampling or chroma sampling, transcoding and dithering. These are words that don't come up in typical conversation unless you're at NAB, of course. Hopefully everyone here understands the difference between 10-bit and 8-bit, right? And the majority of the cameras out there are shooting 8-bit, right? So the higher ones are shooting 10-bit or better, but if you actually compare them, 8-bit only has 256 colors, right? The RGB sampling. If you do 10-bit, that's 1024 colors. You can kind of see the banding through here, right? It's 75% more info. So it would make sense in some warped logic to say, well, if I gave something 75% more quality, it would look better, right?
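The 256 and 1024 figures above are code values per color channel, and they fall straight out of the bit depth. A minimal sketch of that arithmetic follows; the function and variable names are illustrative, not from the talk. Read this way, the 75% figure is the share of 10-bit code values that an 8-bit signal has no way to represent.

```python
# Code values available per color channel at a given bit depth.
def levels_per_channel(bits: int) -> int:
    return 2 ** bits

eight_bit = levels_per_channel(8)    # 256
ten_bit = levels_per_channel(10)     # 1024

# Share of the 10-bit code values that 8-bit cannot represent.
missing_share = 1 - eight_bit / ten_bit   # 0.75

print(eight_bit, ten_bit, missing_share)
```

Wrapping an 8-bit source in a 10-bit container does not fill in those missing values; the same 256 levels are simply stored in a larger range.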

Well, that's when we get into sampling, right? I'm not going to bore you with a dissertation on chroma subsampling, but this is what 4:1:1, 4:2:0, 4:2:2 and 4:4:4 mean. It's basically sampling an area of four pixels and sampling the amount of pixels within that area to come up with a round number. Not going to bore you with it. Here's a better way of phrasing it. This is the 2000 Democratic presidential primary. Notice I didn't do last year, because that's still a hot topic. I'm not going to touch that. But I could sit here all day and read you the results from each polling place, right? And it's boring as hell. Wouldn't it be a lot easier just to say that? Right? And that's basically what chroma subsampling is. It's saying, you know what, I don't have to give you all this information.

I just have to give you this. Alright, here's another way of looking at it. Let's say you pour yourself a drink when you get home, right? Whatever that drink is, it's completely up to you. But there's your glass, right? But you say, you know what? I want more. I want a bigger glass. Okay, you're not adding anything to that. All you're doing is putting the same amount of info in a bigger bucket. So what that means is you're eating up more storage space, you're eating up computational time, and you're not getting any more quality. So why is it mostly false? And I'm surprised Gary or someone here isn't waiting to go, but, but, but. How many here are familiar with dithering? Few people. Okay. Let's say you shoot 4:2:0 4K UHD, but your deliverable is HD. You can dither down from 4:2:0 4K down to HD 4:2:2.

Because remember, when you do chroma subsampling, you're pulling the average between those pixels, right? So you can actually increase your color space by decreasing your raster size, right? So that's the only time you can really add more information to it. So you can certainly give that a shot. You can see this, actually, I think I have a bigger picture here. You can see here on the right-hand side, that's a lot smoother than the other ones. And that's dithered from UHD 4:2:0 to full HD 4:4:4. Does that make sense to everybody? Yeah. Okay, cool. All right, let's move to the next one. Jason Bodog, friend of mine: shooting log does not give you more dynamic range. And I kind of altered that to log formats are the same as HDR, 'cause HDR is a hot buzzword. I want to shoot HDR.
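Stepping back to the downscale exception from the first myth, the numbers behind it can be sketched in a few lines. This is an illustration under assumptions: UHD is taken as 3840x2160 and HD as 1920x1080, and the table and function names are made up for the example. It only counts how many chroma samples each scheme stores, not how a real scaler filters them.

```python
# (horizontal divisor, vertical divisor) applied to the two chroma planes.
SUBSAMPLING = {
    "4:4:4": (1, 1),   # chroma at full resolution
    "4:2:2": (2, 1),   # half the horizontal chroma resolution
    "4:2:0": (2, 2),   # half horizontal and half vertical
    "4:1:1": (4, 1),   # quarter horizontal
}

def chroma_plane_size(width: int, height: int, scheme: str) -> tuple:
    """Return the (width, height) of each chroma plane for a luma raster."""
    h_div, v_div = SUBSAMPLING[scheme]
    return width // h_div, height // v_div

uhd_420 = chroma_plane_size(3840, 2160, "4:2:0")   # chroma stored in the UHD file
hd_444 = chroma_plane_size(1920, 1080, "4:4:4")    # chroma needed for HD 4:4:4

print(uhd_420, hd_444)
```

Both come out to 1920x1080, so a UHD 4:2:0 source already carries one chroma sample for every HD pixel. That is why shrinking the raster is the one case where the result can hold fuller color sampling than the source appeared to have.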

Okay. So this deals with a couple of different points regarding color. In video, we deal with dynamic range. So that would be SDR, standard dynamic range, or HDR, high dynamic range. We're also dealing with various log formats, right? Every camera manufacturer has their own log, right? 'Cause they're artists. Also, I read on a forum that I should use it, right? How many people have said, well, they recommended on the forum that I should shoot log, and they have no idea how to use it, right? That's when you get some funky Resolve LUTs right there. Yeah. So here's a good way of thinking about it. When you shoot with traditional video cameras, SDR, you get eight to 10 stops of dynamic range, right? Like when you walk into, let's say, your bedroom at night and the lights are on, you turn the lights off, the room's dark.

But after a while you can kind of see, right? 'Cause your eyesight's adjusting to those eight to 10 stops within that visual range, right? So what we want to do with high dynamic range is to actually get more in that space. All right. Here's another way of looking at it. This is a beautiful picture of a sunset, right? When you use a camera with standard dynamic range, you get that, right? It gets blown out. Do you see that? What we want to do is recapture that so we get all of this. That's where high dynamic range comes into play. Now, if we take a look at HDR on scopes, SDR versus HDR, you can see that the log image on the scopes does not expand over the full brightness range, right? So log is not giving you that HDR. I know there are different standards with HDR, but it's traditionally over a thousand nits, and that's not hitting over a thousand nits on the scope.

So it's mostly false. The exception is that log will not give you HDR, but you can shoot log in HDR when, uh, when all your gear is HDR. Does that make sense? Yeah. Okay. Moving on. Maybe wide color grading on a computer monitor is different than color grading on a video monitor. This is big for independent editors who don't want to buy a video monitor, right? Perhaps, in LA, we have a lot of assistant editors who may be cutting out of their house and they're doing stuff that's going to broadcast. Well, uh, how can you check the colors, right? Especially if you're doing grading. Um, and the answer I hear all the time is, well, it's close enough. Okay, well, how about next time we're in the edit bay and your director wants to change a frame, you know, the frame effing, right? And you say, no, no, it's close enough.

That doesn't fly. No, they're going to be messing with that frame all day long. So the same goes for color, right? If we're going to all that trouble and all that budget, why would you want to view something on something that's substandard? Right? So this deals with several things. It deals with gamma curves. Again, boring. It also deals with computer emulation and monitor emulation, which we'll get into in a second. And also various display technologies. So the traditional color space for the computer, and I say traditional because there's all sorts of things out there, is sRGB. For HD video, it's Rec. 709, right? And you can see as you look here, they're pretty close, right? When you zoom in closer, though, you can see that there is a difference. It's slight, but it's different, right? And for those of you who really like charts, I've got this, which shows all the color spaces mapped into one, and they're all close, right?
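One place where "close" shows up as a number is the gamma curve. The sketch below encodes the same linear-light value with the sRGB encoding curve and with the Rec. 709 camera curve (OETF), using the constants as they are commonly published for the two standards. It is an illustration only: it ignores display-side behavior, calibration, and the operating system's color management, all of which also affect what lands on screen.

```python
def srgb_encode(linear: float) -> float:
    """sRGB encoding curve for a linear-light value in [0, 1]."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055

def rec709_oetf(linear: float) -> float:
    """Rec. 709 opto-electronic transfer function for a value in [0, 1]."""
    if linear < 0.018:
        return 4.5 * linear
    return 1.099 * linear ** 0.45 - 0.099

mid_gray = 0.18  # 18% gray in linear light
srgb_value = srgb_encode(mid_gray)
rec709_value = rec709_oetf(mid_gray)

print(round(srgb_value, 3), round(rec709_value, 3))
```

The two results differ by roughly five percent of the full signal range at mid-gray. That is small on a chart and still big enough to see when a grade is judged under one curve and viewed under the other.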

For the most part, they're all close. But as I said earlier, close isn't good enough. You know, not only do we have to deal with the different color spaces, fundamental differences, but we also have to deal with the computer that's talking to that computer monitor, right? How many of you have seen the window on the left on your Mac? Right? The computer wants to manage the color that's being output, so now you're dealing with not only the color management that the monitor has, but also the color management that the computer wants, right? Windows has the same thing, right? On the right-hand side, that's Windows 10. You also are dealing with different monitors, right? We have aging plasmas, and believe it or not, people in LA still have a ton of plasmas. Uh, we have LCD, we have TFT and IPS. Uh, did anyone see the new IPS panels this year?

No. Okay. Well, uh, LCD, you're familiar with LCD, right? There's always backlight. Even if you're showing black through there, you still have backlight. They now have a new panel that puts an extra layer in between there, so that when black is shining through the pixel, uh, through the LCD, it actually puts a blinder in front of it. So you're getting true black. Yeah, it's really interesting. So now LCDs are going to cost more than OLEDs. Go figure. Uh, we also have OLED, you know, black is the new black. I think it's the coolest tagline I've ever heard. And projection. And if you really want to get into acronyms, there you go. LCD is liquid crystal display, with thin-film transistor and in-plane switching, and organic light-emitting diodes. I will be giving a test at the end. That's false. That's false.

Mainly because, as I just said, they're different. The color spaces are different. And if you do a grade on a computer monitor for video, it will look different on a video monitor, which means it may get bounced back from QC. No one wants to get bounced back from QC. So next up we have my favorite one, because actually, before I decided to get into the zeros and ones, I was a creative. I was a post audio guy, and this is the popular one. I come from Chicago, and a lot of films were shot near the L tracks. If you've ever spent any time here, the L tracks, they're loud, and invariably the director will call action right when an L is passing by. So the dialogue gets all destroyed, right? So when we talk about audio, Adam Bedford brought this up: audio modulation, you can't fix the [inaudible].

I think he meant audio overmodulation, but we won't split hairs. This deals with several things. Repair tools, like you'll find in your NLE or plugins. It deals with the black art of post audio. I still like to think that that's kind of a black art to some people. And also, I can't afford reshoots or ADR, or I just don't want to deal with the stress of reshoots or ADR. So I'm going to go back to this analogy again. Just like with video, with audio, this is the audible spectrum, but quite often you're using microphones that can only capture this much, right? So the signal gets blown out. Here's a good example. There's what you hear in the wild, but when you capture it with a poor microphone, you're only getting this much, right? So everything above and below in terms of amplitude is lost.

You can't recover that. So what traditionally happens is that repair tools guess what should be there. They see what's going up and what's coming down and they say, well, given, you know, the trajectory and how long it's been doing this, we're guessing it's going to be at this point. That could be completely wrong. You often lose body or warmth. It becomes what they call crunchy, right? And this goes back to one of my favorite sayings: there's never enough money to do it right, but there's always enough money to do it again. So if the dialogue doesn't come out right, well, I guess we are going to have to do ADR after all. So audio overmodulation, you can't repair, but you can try to put a band-aid on it, and usually that means putting the music a little higher, putting the background effects up a little bit more, doing stuff to kind of hide it.
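Why the lost peaks cannot be recovered can be shown with a toy example. The sketch below is a simplified model, not how any particular microphone, recorder, or repair plugin works: it hard-clips two sine waves of different loudness at the same ceiling and lists the samples where the two recordings end up identical.

```python
import math

def clip(sample: float, limit: float = 1.0) -> float:
    """Hard-clip a sample to the range [-limit, +limit]."""
    return max(-limit, min(limit, sample))

def sine(amplitude: float, n: int = 16) -> list:
    """One cycle of a sine wave, n samples long."""
    return [amplitude * math.sin(2 * math.pi * i / n) for i in range(n)]

loud = sine(2.0)      # peaks at twice the recordable level
louder = sine(3.0)    # peaks at three times the recordable level

clipped_loud = [clip(s) for s in loud]
clipped_louder = [clip(s) for s in louder]

# Sample positions where both recordings were flattened to the same ceiling.
flattened = [
    i for i, (a, b) in enumerate(zip(clipped_loud, clipped_louder))
    if a == b and abs(a) == 1.0
]

print(flattened)
```

At every flattened position the two original signals were different, yet the recorded values are the same, so nothing in the file says which one was really there. A repair tool can only extrapolate from the samples on either side, which is the guessing described above.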

So it's false. This is my favorite one. It's also the most boring one, because I think I'm the only one in the world that gets excited about spindles and codecs. Right? So, spindles: storage is the same as capacity. This deals with RAID requirements. Again, no one really likes talking about RAID, although I think some LumaForge people here may disagree with me on that one. Uh, marketing versus actual, uh, which actually is base two versus base eight math. I swore I would never use a phrase like that in my life, but here I am talking about base levels of math. And then OS requirements. Okay. Everyone here knows what a RAID is, right? Right. Okay. But I think what I may do is skip over a little portion of this in the interest of time. But RAID, obviously, is redundancy, gives you a faster speed.

Usually it gives you more protection in case a drive goes south. Uh, it also increases what they call the MTBF, which is the mean time between failures. This means that the chances of a drive failing are a lot sooner when you have multiple drives compared to one drive. Um, we also deal with software RAIDs versus hardware RAIDs. I have the 60% rule in storage, right? And, uh, Patrick, you can certainly use this at LumaForge, but I expect royalties; the patent is pending. So, how I learned to embrace marketing math and performance. Okay, so you go to the store, and I know drives are a little bit bigger than one terabyte now, but indulge my math here. Let's say you buy a one terabyte drive. You never get one terabyte off the bat. Why is that? Well, that's because we're dealing with base eight math versus base two math. We're dealing with kilobytes, megabytes and megabits, which are multiples of eight, as opposed to zero, one, two, three and four.

When you add all that up, you get a 7% loss off the bat. Once you pop that drive in your machine, with most drives that adhere to this marketing versus engineering spec, you'll get 930 gigabytes. Now we have RAIDs, and I know people are gonna call me out on this. RAIDs, as I mentioned, give you redundancy. Depending on what RAID you go with, you lose different amounts of storage to that protection, right? If it's four drives, you're going to lose one drive, but if it's a hundred drives, you're not going to lose as much storage. So what I did is I looked at all the different storage units that I dealt with, and how much am I traditionally losing with RAID 5? Comes out to about 15%. Okay? No one's going to arm wrestle me on that. We're good. No. Okay, cool. So we take 7% from that one terabyte, we then take another 15% from that terabyte.

Next, we have best performance buffer, right? How many of you have always been told, never fill your drives up, right? Especially spinning drives, because performance isn't linear, right? Performance does that, right? We're in the age of, um, solid state now, where this is less of an issue, but still most storage arrays are based on spinning disk because you get more capacity, right? So once you add all these up, this is what it looks like. 7% lost, another 15, another 20: 632 gigs of usable space. And those of you who are thinking that math is off, trust me, I'm taking 15% from that 930 and I'm taking that 20% from that, uh, I think 790 gig mark. So that means you're getting only about 60%, maybe a little bit more, of the storage that you think you're buying. So next time you're talking to a client or you're looking for storage yourself and you say, well, I think they'll probably shoot, I don't know, maybe a terabyte of storage, you probably should be getting two drives, not one.
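The chain of deductions is easy to check in a few lines. This is a sketch of the arithmetic as stated in the talk (7%, then 15%, then 20%, each taken from what is left), with made-up function and parameter names. The last line adds one common way of accounting for the first 7%, which is an assumption beyond what is said on stage: a marketed terabyte counted as 10^12 bytes, reported by the operating system in units of 2^30 bytes.

```python
def usable_gb(marketed_gb: float,
              formatting_loss: float = 0.07,   # marketing vs. reported capacity
              raid_loss: float = 0.15,         # rough RAID 5 overhead from the talk
              headroom: float = 0.20):         # space left free for performance
    """Apply each loss to what remains after the previous one."""
    after_format = marketed_gb * (1 - formatting_loss)
    after_raid = after_format * (1 - raid_loss)
    after_headroom = after_raid * (1 - headroom)
    return after_format, after_raid, after_headroom

after_format, after_raid, usable = usable_gb(1000)
print(round(after_format), round(after_raid, 1), round(usable))

# Assumption: 1 TB sold as 10**12 bytes, shown by the OS in units of 2**30 bytes.
reported = 10**12 / 2**30
print(round(reported, 1))
```

The three steps give about 930, 790.5 and 632 gigabytes, so roughly 63% of the marketed terabyte is left for media. The decimal-versus-binary conversion lands at about 931, which lines up with the 7% figure.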

Okay. This is my favorite one because it's just a duh moment. Uh, you know, a few minutes ago I talked about 8-bit versus 10-bit and how compressed, et cetera. Well, when you compress a file and then recompress it and recompress it, it's gone, like the old days of tape, right? When you make a dupe, you make a dupe, you make a dupe, and suddenly it's fuzzy. You can't tell what it is. The same thing happens with YouTube. If you cut something in DNx, ProRes, CineForm, or maybe you already have an H.264 from your GoPro, and then you transcode that to a YouTube preset inside Premiere, Adobe Media Encoder or Compressor, right? Then you upload it. Do you know that YouTube is compressing that again? Right? Everyone knows that. They're also putting a compressor on your audio. Why help them out?

If they're already going to compress your stuff down, why not just give them a high-res file? If you go to their recommended settings, they have a page here which recommends your bit rates. Now, when I first came up with this presentation, Google used to have a document that said for enterprise accounts you can upload ProRes, et cetera, et cetera. That document is now gone. What you can actually do is upload ProRes. So what I do with 5 THINGS is I do my edit, I export a mezzanine file, a ProRes 422 HQ, and then I upload it. Yes, I need to leave for a couple of hours when I upload it, but I upload that and YouTube will flip it. YouTube will create their file out of that. I can also do CineForm. I can also do DNx.

Now, right now I'm doing DNxHD. At last check, they don't handle DNxHR yet. But it's going to take a while, and here's the math. If, as I mentioned, I do a ProRes 422 HQ at 1080p, that's about 1.5 gigabytes a minute, right? In terms of how big the file is. Uh, my upload speed at home is 1.5 megabytes a second. So that's about 16 minutes per gigabyte. That means for a 10 minute episode of 5 THINGS, it takes 2.6 hours. So if you have an average home connection, um, and you want to do this, it's fine. It's just gonna take a while, but you will see a quality difference, especially in the banding, uh, among gradients. So that's false. The last one is more based around a cool tool, uh, that I've worked with. Um, let's say you finished an hour-long program and you, like me, have fat fingers, and you fat-finger a lower third. You type someone's name wrong, right?
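Going back to the upload math for the mezzanine file, the estimate can be reproduced with a short calculation. This is a sketch using the two rates quoted above and decimal units (1 GB = 1000 MB), which is an assumption; the function and parameter names are illustrative. With those inputs the results land in the same ballpark as the figures quoted on stage rather than matching them exactly.

```python
def upload_hours(minutes_of_video: float,
                 gb_per_minute: float = 1.5,     # ProRes 422 HQ at 1080p, per the talk
                 upload_mb_per_s: float = 1.5):  # home upload speed, per the talk
    """Estimated upload time in hours, assuming 1 GB = 1000 MB."""
    total_mb = minutes_of_video * gb_per_minute * 1000
    seconds = total_mb / upload_mb_per_s
    return seconds / 3600

hours = upload_hours(10)             # a 10 minute episode, about 15 GB
minutes_per_gb = 1000 / 1.5 / 60     # time to push one gigabyte

print(round(hours, 2), round(minutes_per_gb, 1))
```

Either way the conclusion is the same: a ten minute episode is a few hours of uploading on a connection like this, so it is a start-it-and-walk-away job.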

What does that usually mean? Usually means, oh man, I gotta now babysit a new export, right? You've got to export the entire thing. That's not the case. For years, we've been able to use QuickTime Pro, right? And just swap out audio tracks and rewrap it, right, without re-transcoding. It's a cool little hack. But as we know, QuickTime is going the way of the dodo; it's being phased out. So there are other ways we can do that. And that's why there's a tool called cineXinsert. No, they are not paying me for this. Uh, they have a free version that just got announced. And for those of you, how many of you have worked with tape? Okay, we do have some folks here. Okay. So whenever you wanted to do a punch-in on tape, right? All you had to do is set your in point, your out point, and you punch in. Insert editing.

You can do the same thing with digital files. Now, with cineXinsert, you can punch into DNx, ProRes, almost any file out there, and just replace that section. So you can not only fix mistakes, but what a lot of folks in LA are doing is they'll pre-black an hour-long show and then just punch in every time a segment is done. So that means at the end, they don't do a complete output. They're only outputting that one section when it's done. And I put an asterisk next to the price down there because they just released a free version that handles a few formats, and it handles ProRes, VBR and CBR, I think, and a couple other formats. So I think it plugs into both Avid and Premiere. So you could download that and check that out. And here's just the interface, but you have your file you want to, uh, cut into, insert into, on the right, and the new section on the left, and you just punch in. Dead simple. And those are post myths. Uh, my name is Michael Kammes, and please check out the website. View, like, and share often.
