Keenan J. Mock is a Senior Media Archivist at Light Iron in Hollywood. He has made archives for over 200 feature film and television projects. Keenan discusses Hero Checksums, MD5 vs xxHash, and how to battle entropy (data loss) when creating your media archive.

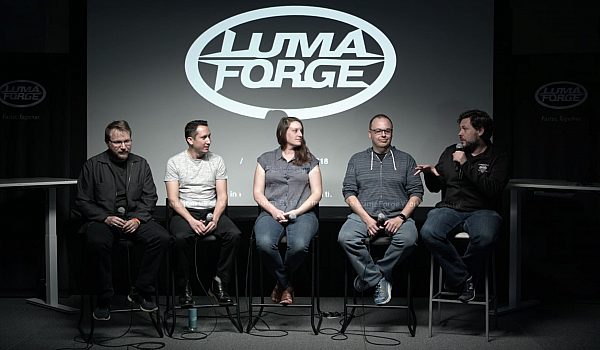

- Thank you, so I'm Keenan Mock. I'm the senior media archivist at Light Iron. I'd like to thank LumaForge for inviting me to speak today. I titled this Media Archivist vs. Entropy because I think it's interesting that, when you think about it, an archivist's core job is to battle entropy, which is the gradual decline into disorder.

Whether you're a media archivist or you're a physical assets archivist at the Smithsonian or at a local library with the Dewey Decimal System, you're always trying to create a system of checks and balances in order to alert you to any disorder. Now, archiving is obviously a very broad topic, but today what I'm gonna try to do is outline a few general principles that, when followed, will guarantee the validity of any archive and give you the best tool set to battle entropy.

Now these principles work small scale, for smaller productions, huge, giant productions, anything in the middle. It'll work for camera negative, like what I'm gonna use in my example today. It'll work for finished files, vacation photos, really anything you've got. So really quick, a little background on both Light Iron and myself. We are Panavision's dailies and finishing services arm.

We've got multiple locations across the U.S. Personally, I am based out of our L.A. office. And we support worldwide productions through all of our services from these facilities. Those services are dailies services, where we'll do customized workflows for facilities-based or near-set-based dailies. We'll create a custom LUT with your final colorist. We have offline rental space in New York and New Orleans. We've got turnkey services available in other cities as well.

As well as episodic and feature finishing: 2K, UHD, 4K, 8K, HDR, Dolby Vision, IMF, J2K, DCP packaging, we've got it. We've got realtime collaboration between our facilities. And our theaters are available for private screenings. And, of course, archiving services. We provide archiving with all our previous services. That can be disc or tape or cloud-based archive. We'll also work with you to help migrate any existing archive into a metadata-rich cloud archive, and we are a Netflix-preferred vendor with their content hub archive. We've been having a close collaboration with them.

We're actually one of only a very small handful of companies that have been greenlit to do OCF upload into their content hub. Personally, in my role as senior media archivist, I oversee the worldwide archive and verification, and I've done over 200 feature films and episodic seasons in the past six years. This includes camera and sound negative from set, from production, as well as any other proxies and stuff created during the production going into the archive, pulls from that archive for the effects pulls and conform, and final-color DI assets of these projects as well. These are some of the titles we've worked on the past few years. Now, this talk may get a little bit nerdy, a little technical.

Somebody out there is probably going to tune out. But what I'm hoping to do is deliver this so that everybody from DITs and archivists, all the way up to content owners and producers can understand it, so if you don't understand any of the tiny pieces, that's okay. I'm hoping to present this in a way that's a little bit bigger as well, so that you can understand kind of the general philosophy. And again, this works for all scales, all data types, you know, disc, feature, cloud, et cetera.

You can tell you've got a good archive based on how confident you are you can delete everything not in your archive, right? So in order to really have that confidence, you need two different things in your verification workflow. Both are pretty straightforward, and I'm gonna use an example to illustrate these. And if you can achieve both of them, you can guarantee the validity of your archive. The first is data integrity verification. And the second is what I call omission verification. I will explain these.

So let's go through a workflow, and I'm gonna use camera negative for my example. But again, this works for anything. So you shoot on the camera, offload to a RAID from the magazine, and hopefully your offload was done with a utility such as one of these that will create a checksum, a little digital fingerprint of your file. And you can have any number of copies. You can have your on-set RAID copying to your shuttle drive, the shuttle drive copying to a near-set RAID, where your dailies are created.

You can have any number of copies before you ultimately write into your archive, which could be a tape, could be a master and protection set of tapes, could be some sort of cloud storage, Glacier, S3, whatever you've got, could be disc if you really want, but for the purpose of this discussion, I'm just gonna represent the archive, whatever that is, with this tape. And if it is a tape, then, in fact, that last copy can be used as a manifest for listing what's on it.

So you've got all these different checksums all along the process, but in order to verify the archive, all you really need to do is compare the latest versus the earliest. And, of course, everything in the middle you know is still okay. Now, you see I've labeled this as the "hero" checksum. This is an important concept at Light Iron. A hero checksum we define as a checksum generated as early as you possibly can, so in this case, the media was offloaded, a checksum was generated during that offload, and that's referred to as a hero.
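To make the "latest versus earliest" comparison concrete, here is a minimal Python sketch. It assumes each manifest has already been parsed into a dictionary of relative path to checksum string; the function name `compare_manifests` and the file names are hypothetical, not taken from any particular utility.

```python
def compare_manifests(hero, latest):
    """Compare two {relative_path: checksum} mappings.

    Returns (mismatched, unverifiable):
      mismatched   - files whose latest checksum differs from the hero checksum
      unverifiable - files in the latest manifest that have no hero checksum
    """
    mismatched = sorted(
        path for path, checksum in latest.items()
        if path in hero and hero[path].lower() != checksum.lower()
    )
    unverifiable = sorted(path for path in latest if path not in hero)
    return mismatched, unverifiable


# Hypothetical manifests: earliest (hero) versus the archive write.
hero = {"A001/C001.mov": "aa11", "A001/C002.mov": "bb22"}
latest = {"A001/C001.mov": "AA11", "A001/C002.mov": "cc33"}
print(compare_manifests(hero, latest))
```

Only the two ends of the chain are compared here; if they agree, every intermediate copy was necessarily fine as well.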

If this checksum didn't exist and only the second one did, we would still verify against that because that's the earliest that happened, but we would not consider that a hero checksum. If this magazine was offloaded using a Finder drag and drop and then after that a checksum was generated, that would also not be considered a hero checksum. So a hero checksum is this idea that a checksum is generated the earliest you possibly can. And then we always try to verify against that, of course. So the earliest isn't necessarily a hero, and the latest isn't always your write, your record of your write into the archive.
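As a rough illustration of what "generated during that offload" can mean, the sketch below hashes the bytes as they are read from the source while copying, so the checksum describes the source data and not a copy made earlier by some other tool. It uses MD5 from Python's standard library; the function names are hypothetical, and real offload utilities do considerably more (multiple destinations, read-back verification, manifest writing).

```python
import hashlib


def offload_with_checksum(src_path, dst_path, chunk_size=1024 * 1024):
    """Copy src to dst, hashing each chunk as it is read from the source."""
    digest = hashlib.md5()
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)
            if not chunk:
                break
            digest.update(chunk)  # fingerprint of the source bytes
            dst.write(chunk)
    return digest.hexdigest()


def file_checksum(path, chunk_size=1024 * 1024):
    """Re-read a file later (for example after shipping) and hash it."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()
```

The value returned by `offload_with_checksum` plays the role of the hero checksum; any later `file_checksum` result on a downstream copy should match it.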

For example, if you did write LTO, and you ship your LTO to a facility or some other location, you would then need to rescan the archive, generating new checksums to make sure nothing's changed, and verify against your hero. Now, real quick, I wanna focus on these checksum files here. As you probably know, not all checksums are created equally. They come in all sorts of different flavors or algorithms. So, for the longest time, MD5 was the standard. And it was really the only algorithm that was used to create data-verification checksums. And recently, xxHash has come out. DITs and others began using this but weren't necessarily aware of the rest of the process down the line.

They were just focused on verifying their own archive. So when we were trying to verify the archive later, it wasn't matching against the xxHash. So we, of course, have to pivot, and as long as you've got the same algorithm all the way through, you're gonna be okay. So just talk with the rest of the chain as you're getting set up, so that everyone is aware which algorithm is being used. And that'll give you the most efficient strategy for making sure you've got your data integrity. So, again, that's data integrity verification. And real quick, because xxHash has become so popular recently, I wanna talk about it really quick against MD5. Now MD5 is MD5 is MD5 is MD5 is MD5.

On any system or implementation, if you run an MD5 on it, you're always gonna get back the same string, assuming the file hasn't changed, of course. Now, that's not necessarily the case with xxHash. With xxHash, if all that is referred to is xxHash and then the hash string, that could actually be referring to one of two different algorithms, xxHash32 or xxHash64. And I believe they're thinking about doing a 128 as well. It's a newer algorithm; it's still in development.

So, to start with, it can be 32 or 64. Another thing that's different between xxHash and MD5 is that the output requires encoding before you can save your hash string, and there's different ways of doing that encoding. There's little endian and big endian. So, there's two different ways you can encode your hash string, and separately, there's even a seed value within the algorithm that could be any integer you specify. That can be different. So you've got all these different variables, all of which generate different strings, and all of which are technically valid xxHash checksums for this same file that hasn't changed.
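The encoding point can be shown without any hashing library. The Python sketch below takes one 64-bit integer and writes it out as a hex string in both byte orders. The integer is an arbitrary illustrative value, not a real hash of anything, and `encode_digest` is a hypothetical helper name.

```python
def encode_digest(value, bits=64, byteorder="big"):
    """Render an integer hash value as a fixed-width hex string."""
    return value.to_bytes(bits // 8, byteorder).hex()


example = 0x0123456789ABCDEF  # arbitrary illustrative value, not a real hash

print(encode_digest(example, 64, "big"))     # 0123456789abcdef
print(encode_digest(example, 64, "little"))  # efcdab8967452301
```

Both strings describe the same underlying number, yet a plain string comparison between them fails, which is how an unchanged file can appear to have a mismatched checksum.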

So, if you're gonna use xxHash, you just need to be aware of these variables ahead of time. Now, that all being said, there is a standardization effort in progress that is focusing on this 64, big endian, seed 0. The utilities I mentioned earlier all use this, I believe. But again, just so you're aware of this as a potential pitfall. You have to make sure that you're using the same checksum algorithm all the way through. So that's data integrity verification. But if you don't have any data, then there's no integrity with which to verify.
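One way to keep those variables from being forgotten is to record them next to the checksums and refuse to compare manifests whose settings differ. The following Python sketch is only an illustration of that idea; `HashSpec` and `assert_comparable` are hypothetical names, and no existing manifest format is implied.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HashSpec:
    """The settings that must match before two checksums can be compared."""
    algorithm: str   # e.g. "md5" or "xxhash"
    bits: int        # e.g. 128 for MD5, 32 or 64 for xxHash
    byteorder: str   # "big" or "little" encoding of the output
    seed: int        # xxHash seed; 0 for algorithms without one


def assert_comparable(hero_spec, archive_spec):
    """Raise if the two manifests were not produced with the same settings."""
    if hero_spec != archive_spec:
        raise ValueError(
            f"checksum settings differ: {hero_spec} vs {archive_spec}"
        )


# The combination described above: 64-bit, big endian, seed 0.
XXH64_BE_SEED0 = HashSpec("xxhash", 64, "big", 0)
assert_comparable(XXH64_BE_SEED0, HashSpec("xxhash", 64, "big", 0))
```

A mismatch raised here means the settings need to be reconciled (or one side rehashed) before any per-file comparison is meaningful.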

So that gets us into the other thing, which is omission verification. It's pretty straightforward. You get a directory listing of the RAID that had everything on it. You get a directory listing of your archive. And you just go through and you make sure all the files are there. It's more or less straightforward, but if a file isn't there, you're gonna wanna be alerted about it, and checking hashes all day long, you're never gonna notice this. Now, if your files are all there, you can still run into an issue if your directory structure is different.
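The listing comparison described here can be sketched in a few lines of Python. This is a minimal version that assumes both the source RAID and the archive are reachable as ordinary directories; the function names are hypothetical. Because it compares paths relative to each root, a file that was moved to a different folder is reported as missing from its original location.

```python
import os


def list_relative_files(root):
    """Return the set of file paths under root, relative to root."""
    found = set()
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            full = os.path.join(dirpath, name)
            # Normalise separators so listings from different systems compare.
            found.add(os.path.relpath(full, root).replace(os.sep, "/"))
    return found


def find_omissions(source_root, archive_root):
    """Files present under source_root but absent from archive_root."""
    return sorted(
        list_relative_files(source_root) - list_relative_files(archive_root)
    )
```

An empty result from `find_omissions` says every source file has a counterpart at the same relative path in the archive; it says nothing about the contents, which is what the checksum comparison is for.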

This happens pretty commonly. I think we've all done this. We'll make a copy of our vacation photos or whatever, put it on another drive, and then we'll change our structure over here, and then we can't go back and find what we were looking for later. We always say that once you write your archive, you don't wanna change your structure at all, because when you're trying to verify, you're gonna run into some issues. So, that's pretty straightforward.

That's the omission verification part. And so, if you put everything together, the full picture looks like this. You got the existence of a hero checksum. You're verifying against it, all the way through. You've got your omission verification, making sure you have all of your files. And again, this can be, this was camera negative, but you can replace these with whatever your files are, whatever your archive is, whatever your workflow is. Same principles will apply to whatever you're doing.

Wrapping up, we have kind of a saying at Light Iron that not all post is created equal. So, when someone tells you that something is verified, what does that mean? Does their verification workflow include both data integrity and omission verification? Do you know that the same hash algorithm was used all the way through? Did they just check at the last step, with a different algorithm than at the beginning, and decide not to go back and reverify everything? And was there a hero checksum they were verifying against the entire time?

And this is probably one of the most important ones, the hero checksum, because even if you don't have any of these other things, if you have a hero checksum, then at any point in the future, you can always go back and verify against it. Now, I'm not doing the world's archiving, but if I was a producer or a content owner, these are the concepts that I would wanna be familiar with, and these are the things that I would ask my post-house about.

Because if you can create a system that adheres to all of these principles, then you can guarantee an archive that has effectively defeated entropy, at least until the sun expands. So if you have any questions, my email address is here, Keenan dot Mock at Light Iron dot com. I'll be around afterwards as well if you have any additional questions. Thank you.
